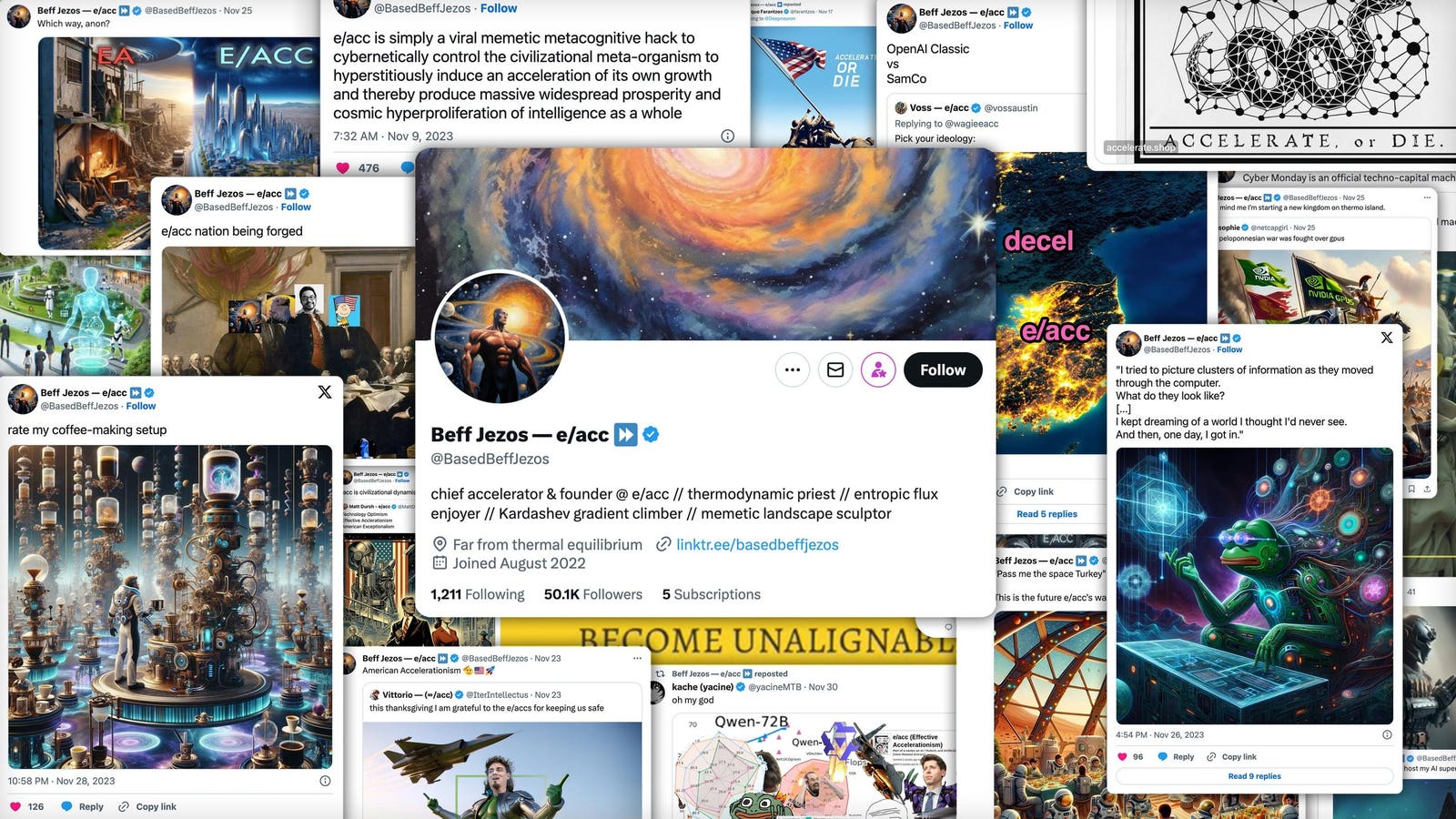

At various points, on Twitter, Jezos has defined effective accelerationism as “a memetic optimism virus,” “a meta-religion,” “a hypercognitive biohack,” “a form of spirituality,” and “not a cult.” …

When he’s not tweeting about e/acc, Verdon runs Extropic, which he started in 2022. Some of his startup capital came from a side NFT business, which he started while still working at Google’s moonshot lab X. The project began as an April Fools joke, but when it started making real money, he kept going: “It’s like it was meta-ironic and then became post-ironic.” …

On Twitter, Jezos described the company as an “AI Manhattan Project” and once quipped, “If you knew what I was building, you’d try to ban it.”

At least the Italian futurists were up front about their agenda.

Reading Nudge to engineer the ‘Volksschädling’ to board the trains voluntarily. Dusting off the old state eugenics compensation programs.

The fuck do they mean “solve culture”? Is culture a problem to be solved? Actually don’t answer that.

even more horrifying — they see culture as a system of equations they can use AI to generate solutions for, and the correct set of solutions will give them absolute control over culture. they apply this to all aspects of society. these assholes didn’t understand hitchhiker’s guide to the galaxy or any of the other sci fi they cribbed these ideas from, and it shows

It’s like pickup artistry on a societal scale.

It really does illustrate the way they see culture not as, like, a beautiful evolving dynamic system that makes life worth living, but instead as a stupid game to be won or a nuisance getting in the way of their world domination efforts

remember that Yudkowsky’s CEV idea was literally to analytically solve ethics

In an essay that somehow manages to offhandedly mention both evolutionary psychology and hentai anime in the same paragraph.

It’s like when he wore a fedora and started talking about 4chan greentexts in his first major interview. He just cannot help himself.

P.S. The New York Times recently listed “internet philosopher” Eliezer Yudkowsky as one of the major figures in the modern AI movement; this is the picture they chose to use.

You may not like it, but this is what peak rationality looks like.

the cookie cutter glasses are load bearing

The ultimate STEMlord misunderstanding of culture; something absolutely rife in the Silicon Valley tech-sphere.

These dudes wouldn’t recognize culture if it unsafed its Browning and shot them in the kneecaps.

Don’t have to have Culture War when you can just systemically deploy the exact culture you want right from the comfort of your prompt, amirite?!

(This is a shitpost idea but it’s probably halfway accurate, maybe modulo the prompt (but there will definitely be someone also trying that))

use case for urbit found

The best use case for Urbit is marking its proponents as first up against the wall when the revolution comes.