This is the best summary I could come up with:

“Manipulation of human likeness and falsification of evidence underlie the most prevalent tactics in real-world cases of misuse,” the researchers conclude.

“Most of these were deployed with a discernible intent to influence public opinion, enable scam or fraudulent activities, or to generate profit.”

Compounding the problem, generative AI systems are increasingly advanced and readily available, “requiring minimal technical expertise,” according to the researchers, and this is distorting people’s “collective understanding of socio-political reality or scientific consensus.”

If you read the paper, you can’t help but conclude that the “misuse” of generative AI often sounds a lot like the tech is working as intended.

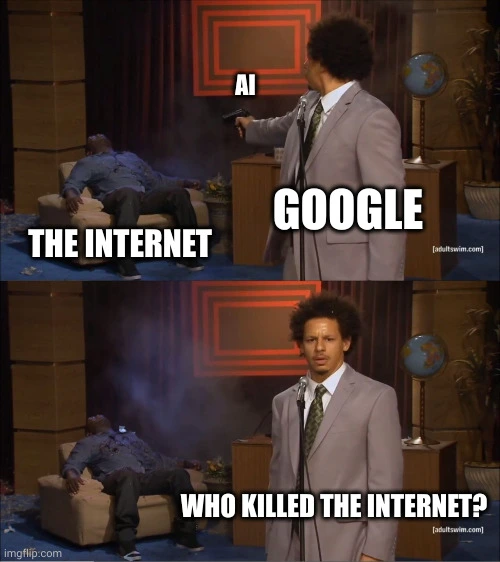

“Likewise, the mass production of low quality, spam-like and nefarious synthetic content risks increasing people’s scepticism towards digital information altogether and overloading users with verification tasks,” they write.

And chillingly, because we’re being inundated with fake AI content, the researchers say there have been instances when “high profile individuals are able to explain away unfavourable evidence as AI-generated, shifting the burden of proof in costly and inefficient ways.”

The original article contains 411 words; the summary contains 175 words. Saved 57%. I’m a bot and I’m open source!