Need to let loose a primal scream without collecting footnotes first? Have a sneer percolating in your system but not enough time/energy to make a whole post about it? Go forth and be mid: Welcome to the Stubsack, your first port of call for learning fresh Awful you’ll near-instantly regret.

Any awful.systems sub may be subsneered in this subthread, techtakes or no.

If your sneer seems higher quality than you thought, feel free to cut’n’paste it into its own post — there’s no quota for posting and the bar really isn’t that high.

The post-Xitter web has spawned so many “esoteric” right-wing freaks, but there’s no appropriate sneer-space for them. I’m talking redscare-ish, reality-challenged “culture critics” who write about everything but understand nothing. I’m talking about reply-guys who make the same 6 tweets about the same 3 subjects. They’re inescapable at this point, yet I don’t see them mocked (as much as they should be).

Like, there was one dude a while back who insisted that women couldn’t be surgeons because they didn’t believe in the moon or in stars? I think each and every one of these guys is uniquely fucked up and if I can’t escape them, I would love to sneer at them.

(Semi-obligatory thanks to @dgerard for starting this)

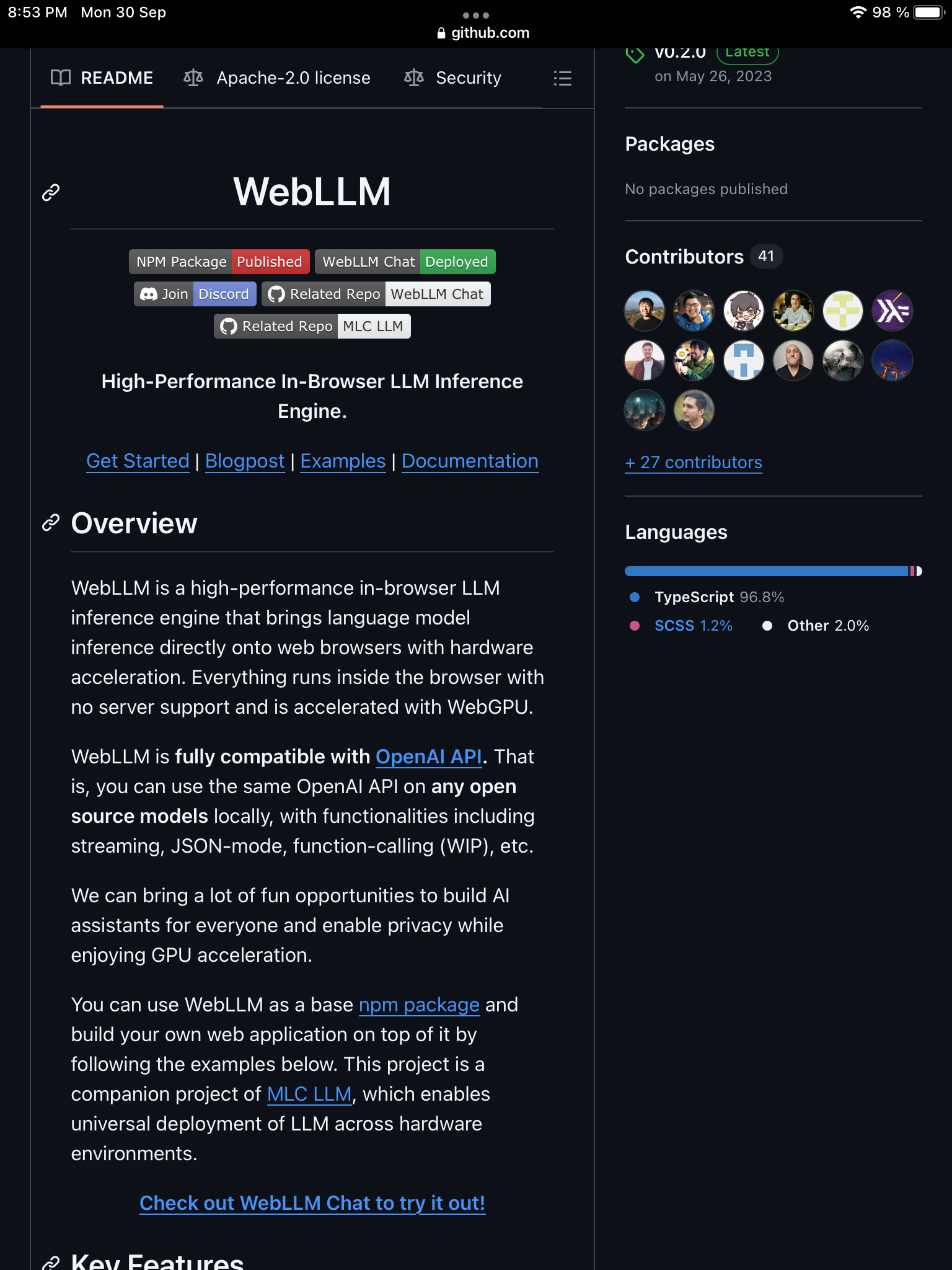

I might be wrong, but this sounds like a quick way to make the web worse: putting a huge computational load on your machine for the sake of privacy inside customer service chatbots that nobody wants. Please correct me if I’m wrong.

I do believe that’s the Chrome-only horseshit that Proton uses for their local LLM, and reputedly it’s very slow and fairly unreliable.

The whole concept of responsive really died in the arse with the onset of the full stack web developer.

the web platform is great!

Did you ever see that a16z-funded startup that was making a web browser that streamed the web from an intermediary server? It was fucken wild.

Edit: I guess it was YC-funded? https://jacobhrussell.com/blog/mighty

oh it’s the web operating system again! things that make a web browser an operating system:

One other thing, also correct me if I’m wrong, but isn’t it a giant key logger as well?

…huh, that is true! so another bullet point and this one’s shared by more than one “web operating system”:

in this case it’s pretty bad, ’cause it’s got the same issue as all hosted VMs: if the host or hypervisor is compromised, so are all the VMs. but also, effectively anyone on Mighty’s side with access to the event stream would have enough data to compromise your entire existence

Haha no way! Turns out the founder pivoted to AI 5 months after that article was published: https://xcancel.com/suhail/status/1591813110230568963

I read this twice as LLM interference engine and was hoping for something like SETI or Folding@Home except my computer could interfere with ChatGPT somehow.